Putting DALL-E into Practice

“Any sufficiently advanced technology is indistinguishable from magic” - Arthur C. Clarke

We are living in a golden era of Artificial Intelligence technology where the advances we see are so impressive, they often look like magic!

Not long after OpenAI took the world by storm by unleashing GPT-3 (2020), one of the most powerful language models ever made, the company did it again with DALL-E 2, a machine learning model that generates images from descriptive text inputs (the original DALL-E was built on a version of GPT-3). And as was (and still is) the case with GPT-3, entrepreneurs and innovators around the globe are now racing to figure out the most useful and most profitable applications of DALL-E and turn them into products and solutions.

To be clear though, both language models like GPT-3 and image generation models like DALL-E 2 have existed for a while, and computer scientists have been publishing papers about their efforts to build and improve them. What is really special here is the generativity of the technology, that is, the characteristics that enable distributed innovation on a massive scale:

First, these AI models are REALLY good and produce less noise and fewer poor results than previous iterations, which makes them much more practically useful. The training data has been unprecedentedly large in scale, and the optimizations and tweaks made to the algorithms have been highly effective.

Second, these AI models have been made easily accessible and usable by non-specialists through no-code interfaces and low-code APIs. This allows people who know nothing about how the underlying computer science works to employ the models, apply them to various problems, and make products with them.

For example, no-code entrepreneur Stephen Campbell used the GPT-3 API to summon its powers into a Bubble.io application, turned it into a product, and successfully sold it in a tiny acquisition. A detailed how-to is here and he also talks about it in this interview:

New alternatives to GPT-3 and DALL-E, such as BLOOM and Midjourney, are following the example OpenAI set in how they let the public interact with these tools.

Unlike with GPT-3, however, OpenAI has not yet provided API access to DALL-E, so it is not technically possible to call DALL-E from other software applications or to automate its use. It is likely that API access will become available soon, with a business model similar to GPT-3's in which users are charged per API call.

I came very close to a workaround for automating DALL-E without API access using a powerful web scraper and browser automation tool called Automatio.co, and I am sure others with more browser automation expertise and programming knowledge can achieve this. But for now, using DALL-E manually will be enough for me to explore what it can and can't do and to discover potential opportunities to put this wonderful technology to use. Here are a few things I tried.

Getting Picasso to Draw Iranians

Maybe it was because of my Iranian heritage, but the first thing I thought to ask DALL-E was to create a portrait of an Iranian woman in the style of Pablo Picasso. I think the results were actually pretty stunning:

Interestingly, many people who saw these on my social media noticed that they were mostly sad. To compare, I asked it to paint Iranian men in the same style:

I guess they are not too happy either but maybe not as sad as the women. Overall though, I really like these images. Some of them truly feel like what Picasso may have painted if his subject was Iranian. And therein lies a key value of DALL-E: being able to apply different styles to any subject or idea, even if the originator of that style has passed away. It is like giving new life to the style of these great artists by creating new applications of it.

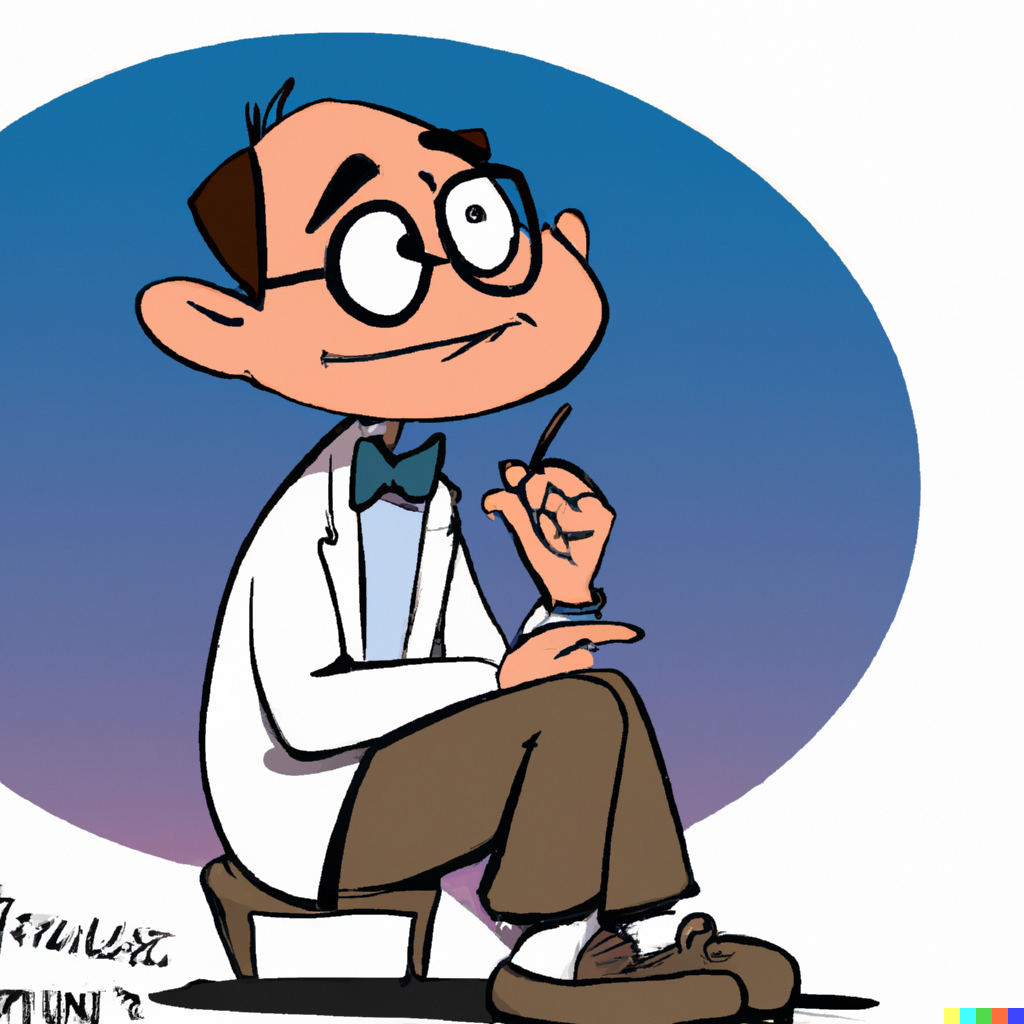

Getting DALL-E to Draw a Cartoon Portrait of Me

I wanted a cartoon portrait of myself sitting and thinking of ideas to use as a header graphic on the home page of this blog. I wondered if DALL-E could help me generate this, as commissioning someone to do it would probably cost around $200.

DALL-E does allow you to upload an image instead of giving it a text prompt, and it is able to create variations or edit the image with text prompts. However, when I tried uploading a picture of myself I was faced with an error message: to prevent potentially harmful use, DALL-E is not currently allowing uploads that include real faces. Fair enough.

OK, then, I thought perhaps if I give DALL-E enough detail about what I look like, it may be able to generate something that resembles me. For the most part, the results were pretty bad. Not only did they not resemble me, but often they were just bad art. They often looked like they were drawn by a novice:

Through trial and error I learned to use terms like Iranian man, Canadian man (because maybe I don't actually look like a stereotypical Iranian), professor, brown haired, glasses, happy and hopeful, inspired, thinking of ideas, etc. and I tried various cartoon styles that I knew of. After quite a few trial and error iterations and spending more OpenAI credits than I had budgeted, I finally arrived at something that looked OK because it resembled a photo I had of myself from a few years ago when I was in grad school:

I knew I wasn't going to get any closer than this! But it is still far from perfect. I can look past the slightly awkward eyes, but notice that weird shape inside the thought bubble? So I had to get to work, take it into Photoshop, and add my own edits to it. While at it, I put a round white border around it too, to better suit the white background on this site.

The key lesson for me here was that generating images with AI for some applications is such a hit-and-miss trial-and-error process that even the best outcome you can create is still likely to be incomplete and will require human effort to take it to the finish line.
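The trial and error described in this section can be made a little more systematic by writing out the prompt variations ahead of time. Here is a minimal sketch in Python, assuming nothing about DALL-E itself: it only combines descriptor lists into prompt strings that can then be pasted into DALL-E by hand. The function name `build_prompts`, the prompt template, and the style names other than "cartoon" are my own illustrative assumptions, not something DALL-E requires.

```python
from itertools import product

# Descriptors taken from the experiments above.
SUBJECTS = ["Iranian man", "Canadian man", "professor"]
MOODS = ["happy and hopeful", "inspired"]
FIXED_DETAILS = "brown haired, glasses, thinking of ideas"

# Hypothetical style names, chosen only for illustration.
STYLES = ["cartoon", "comic book", "flat vector illustration"]


def build_prompts(subjects, moods, styles, details):
    """Return one prompt string for every subject/mood/style combination."""
    return [
        f"{style} portrait of a {mood} {subject}, {details}"
        for subject, mood, style in product(subjects, moods, styles)
    ]


prompts = build_prompts(SUBJECTS, MOODS, STYLES, FIXED_DETAILS)

# Print a numbered checklist to work through manually in DALL-E.
for number, prompt in enumerate(prompts, start=1):
    print(f"{number:02d}. {prompt}")
```

A numbered list like this makes it easier to keep track of which combinations have already been tried, and to budget credits before starting rather than after.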

Getting DALL-E to Create Stock Images

Given how difficult it was to come up with the right prompts to get the results I wanted, I started to think that the prompt engineering skill and the understanding of DALL-E that I am developing through this process are valuable, and that others who don't have the time and resources to develop them may wish to purchase the outcomes of this expertise from people like me. So how do I "productize" my DALL-E prompt engineering skills?

One idea I thought of was to generate generic images that others could potentially purchase and use as stock images. Selling stock photos and images is already a huge industry so there may be demand to purchase AI-generated art and imagery. Just to give it a shot, I tried generating some images of groups of people working with computers:

Again, we see that it is not easy to get good images. Often, faces and eyes are deformed or something otherwise looks awkward. Another problem is that when you use generic terms like "groups of people," the majority of images produced have only white people in them.

But these results don't dissuade me. Actually, they make me further realize that creating really good outputs with AI is pretty hard, involves a lot of trial and error and learning, and costs both time and money. This means that it is a valuable skill and the outcome of it is a valuable product. If I can figure out ways to efficiently make good stock images with DALL-E, I may be able to turn it into a business. I'll explore this idea some more in future posts.

Lessons Learned

Here is a recap of some lessons I learned from playing with DALL-E:

DALL-E is Genius, but still Stupid

DALL-E has many limitations. It is well known that it has trouble with faces, and particularly eyes. It sometimes draws things that just don't make sense or seem out of place (and not in a creative artsy way). It takes as input a description in natural language which has its own limits and constraints, and even when you use the language properly it still frequently doesn't understand it right. It has trouble distinguishing characters you describe in different ways, and often combines those descriptions into characters in ways that are obviously not what you asked for. A couple of times in my trials, it generated images that were completely distant from and irrelevant to what I had asked for. But overall, it does frequently surprise you with pretty good results.

DALL-E Prompt Engineering is a Human Skill

Using DALL-E properly is definitely a skill, and it requires a good amount of knowledge of the arts: everything from the techniques of photography, the genres of art, and the names of artists to how to communicate these things to DALL-E in descriptions that produce better outcomes. This is why DALL-E prompt engineering is going to become an important and in-demand category of skill in the job market, and why breadth-inclined artists, art majors, and creatives will have a head start.

An indication that AI prompt engineering is going to become a complex skill is that people are already compiling guides and books on it. As Guy Parsons writes in the DALL-E 2 Prompt Book:

"DALL-E has not explicitly been 'taught' anything...it has just studied 650 million images & captions, and left to draw its own conclusions. That's why there can't be a regular 'manual', based on functionality that the developers intentionally programmed in – even the creators of DALL-E cannot be sure what DALL-E has or hasn't 'learned', or what it thinks different phrases mean. Instead, we have to 'discover' what DALL-E is capable of, and how it reacts."

This discovery is human work, and learning what others discover is going to be human work, and so there is going to be a lot of human work involved in producing good outcomes with AI.

DALL-E will NOT replace Human Artists

There are many reasons why AI models like DALL-E are not replacing human artists any time soon, and are in fact giving them superpowers:

- As discussed above, human artists are best positioned to learn DALL-E prompt engineering, figure out its capabilities and learn how to communicate with it.

- Human artists are also best positioned to be able to fix DALL-E errors or complete DALL-E's work when it feels incomplete.

- Human artists are empowered by DALL-E because they can use it effectively as a tool to generate digital assets or components that they can then use in their own work.

- Human artists are needed for curation of AI-generated work. There is an important selection and curation skill needed in working with AI-generated art, to be able to understand which ones among various outputs are better in quality and artistic value. I said above that I think the Picasso portraits were pretty good, but someone better trained in the arts would know much better.

- DALL-E often produces something that seems to contain an "interesting" idea but is not executed properly, and that is where a human artist can take the idea as inspiration and produce a properly executed version.

- Even if DALL-E has executed the idea perfectly, it is not capable of "running with an idea" to extend it or apply it to other things. Most likely, for DALL-E it is just a pattern that it happened to produce once, whereas an artist will be much more capable of taking the idea further.

DALL-E has Unlocked New Contributions of History's Great Artists

What were the contributions of Da Vinci, Van Gogh, and Picasso to humanity? For sure, they are immense, and their great works will remain treasures of humanity forever. But DALL-E has now unlocked a new meta-level contribution that these great artists made to humanity: they taught us their styles, such that not just our artists, but now our computers can also apply their styles to new works of art in their absence. Every new image that DALL-E creates "in the style of Picasso" contains the real Picasso's contribution in his absence.

DALL-E Reflects our Dark Side too

When I asked it to draw Iranian women, it drew mostly sad women. When I asked it to draw groups of people, it drew mostly white people. This just reflects the biases that exist in the data that DALL-E had available to it for training. The programmers who make these AI models have to do a better job of de-biasing them when the underlying data has biases. In the meantime, getting DALL-E to work around these issues requires better, more specific prompts, and that kind of prompt engineering is a human skill.

Conclusion: Good Images Generated with DALL-E are Costly and Valuable

This was perhaps the most important realization, because the knee-jerk reaction to seeing DALL-E for the first time is to think that it will commoditize art and replace artists, and furthermore that it will devalue art because now anyone can make a Picasso. Hundreds of them. Thousands or millions of them on autopilot!

I have learned that although there may be some truth to that concern, it is at best an incomplete picture of the changes that DALL-E and AI models like it will bring.

I have learned that there is a lot of human skill and effort required for getting good results from AI-generated art. It is tricky to get the AI to generate certain things if you can't come up with the right prompt. And there is no manual for how to give the AI prompts! It has to be "discovered." It is a trial and error process that is costly and time consuming.

Even when you have the right prompt, the outcome is sometimes messy, with messed-up faces or eyes, or other things in the image that seem out of place or just don't look right. That again results in costly trial and error.

I have learned that, in my experience, DALL-E never creates the exact same image twice. So if a masterpiece is generated by accident and not saved, it could be lost. There is some scarcity value in selecting and saving the best outputs from AI models. But that requires knowledge, the ability to discern quality, and the time, resources and effort that go into such curation work.
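Because an output that is not saved may be gone for good, it helps to keep each image together with the prompt that produced it. Below is a minimal sketch in Python, assuming the image has already been downloaded manually from DALL-E as a PNG file. The function name `archive_output`, the folder layout, and the fields in the JSON note are hypothetical choices of mine for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def archive_output(image_bytes, prompt, archive_dir):
    """Save an image plus a JSON note recording the prompt that produced it.

    The file name is derived from a hash of the image content, so saving
    the same image twice does not create a duplicate.
    """
    archive_dir = Path(archive_dir)
    archive_dir.mkdir(parents=True, exist_ok=True)

    digest = hashlib.sha256(image_bytes).hexdigest()[:16]
    image_path = archive_dir / f"{digest}.png"
    image_path.write_bytes(image_bytes)

    note = {
        "prompt": prompt,
        "image_file": image_path.name,
        "saved_at": datetime.now(timezone.utc).isoformat(),
    }
    note_path = archive_dir / f"{digest}.json"
    note_path.write_text(json.dumps(note, indent=2), encoding="utf-8")
    return image_path
```

For example, `archive_output(Path("download.png").read_bytes(), "portrait of an Iranian woman in the style of Pablo Picasso", "dalle_archive")` would copy a downloaded file (here a hypothetical `download.png`) into a `dalle_archive` folder next to a small note containing the prompt, which is exactly the information needed to retrace a lucky result later.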

I have also learned that DALL-E often produces creative or imaginative results, at least by human standards, because it has learned from patterns of human creativity and imagination. It is not technically correct to say that the AI is "being creative" but when it mimics creative patterns and produces results that make you go "wow," that's a pretty magical moment! For me, this image was an example of one of those wow moments:

Thank you for reading the second post of my journal on DigitVibe.com! Subscribe and follow my next steps working on entrepreneurial side projects. After reading this post, you will not be surprised when I tell you that an upcoming post will be about my project to build an online store selling AI-generated stock images at AIGallery.cc.
